$700 for a 64 GB iPhone 6s? Seemed too good to be true, but that’s what the mail in my inbox said. It didn’t look spammy, so, I clicked on the link, and went to the gadget website. Everything looked legitimate with good user reviews. Great! So, I clicked “buy”, and… the website just went blank. It refused to load any more. I went back a page, but the site was still down.

Suddenly, I got a bit of cold feet. I’ve never done transaction with this site before. Is it reliable? What happens if the site went down during payment? Better be safe than sorry! I closed the browser, and let it go.

My shopping behavior is not unique. When it comes to parting one’s hard earned cash, most customers are finicky. Website uptime says a lot about business reliability

When it comes to spending one’s hard earned cash, most customers are demanding about website reliability, and site uptime is the most visible indicators of a reliable business. To cater to this demand for high uptime, several hosting providers now offer “Mission critical hosting” or “high availability hosting”, where the Service Level Agreement guarantees at least 99.9% server uptime.

Recently, a hosting provider contacted us to build and maintain a hosting infrastructure that could guarantee 99.95% uptime. The hosting provider was using redundant hardware (like RAID disks, dual power units, etc) for fault tolerance, but still faced a couple of issues where extended downtimes were caused due to system errors, network failures, etc. This resulted in more than 22 mins downtime per month (leading to SLA breach of 99.95% uptime).

We recommended using a cluster of virtual machines with auto-failover, so that even if one of the machines go down, the others in the cluster can bring the virtual machines back online within a couple of minutes. There were several proprietary virtualization solutions that had this capability, but since the hosting provider wanted to keep the costs low, we recommended a server virtualization solution called oVirt.

oVirt is the open source version of Red Hat Enterprise Virtualization solution, and is considered a reliable virtualization platform. Today, we’ll go through how high availability through auto-failover was configured using oVirt server virtualization.

High availability in oVirt server virtualization

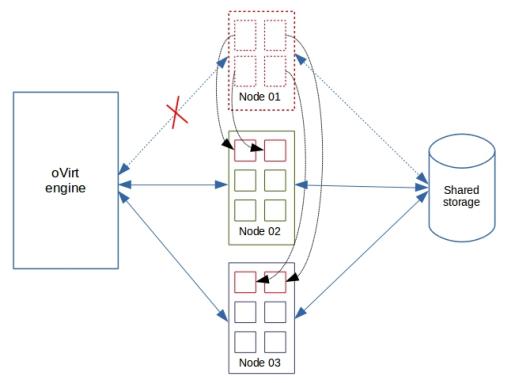

The oVirt system groups servers into clusters. A central server called oVirt engine monitors each server in a cluster, and in case one server (say Node01) becomes unreachable, all virtual machines (VMs) in that server will be equally distributed to other servers in the cluster. Users of those VMs would notice a small break in services, but everything would be back online in a couple of minutes.

This works because all operating system and application information of VMs are stored in a shared storage space accessible by all servers. As you can see in the image, “Node 01”, “Node 02” and “Node 03” access the same “Shared storage”. So, VMs in “Node 01” can work equally well in “Node 02” or “Node 03” as long as the “Shared storage” is accessible from those servers.

When the host “Node 01” fails, the VMs in “Node 01” is automatically migrated to “Node 02” and “Node 03”.

For high availability to work in oVirt, there are a few pre-conditions to be met. This includes a highly available shared storage device, power management on all servers, and surplus resources on all servers to accommodate VMs from other servers. Let’s take a look at them one by one.

Configuring shared storage

A shared storage is at the core of a highly available system. Unlike traditional dedicated servers, virtual machines (aka VMs) in a cluster store their operating system and applications in an external, high-speed storage device that sits outside the servers. So, even if a VM’s host server goes down, the storage device remains online. It is then just a matter of starting the VM in another host server to bring the services back online.

In the server virtualization system we implemented, one of the shared storage devices we used was a RAID 10 array. All servers in the cluster had access to this storage device. This made it possible for all VMs using that shared device to run off any host server in the cluster. We chose a high-speed, redundant storage device such as RAID 10 array for this purpose because high availability would work only if the storage device remains online at all times.

Configuring power management on hosts

As mentioned earlier, oVirt is centrally managed by a server called oVirt engine. It is the oVirt engine’s job to detect if a server in a cluster has gone down, and initiate a VM transfer.

Now, consider a scenario where the network cable between oVirt engine and a server is cut, but the server is still connected to the shared storage device. The oVirt engine would think that the server is offline and create clones of VMs in that server on other servers. This will essentially corrupt the data of all the VMs hosted on that cloud server.

To avoid this situation, oVirt REQUIRES that power management be accessible on all servers in a cluster for high availability to function. When oVirt detects a server to be offline, first it’ll try to shutdown the server by turning off the power. ONLY IF the power shutdown is a success, will it attempt to put the VMs on another server.

So, before we enabled high availability in VMs, we configured power management for all servers in the cluster. It is done by navigating to “System” –> “Data Centers” –> “Clusters” –> “Hosts” –> “Edit”. The power management fields were filled in as shown here:

Planning surplus resources to accommodate fail-over VMs

Let’s say there are 25 VMs in a server called “Node 01”. These VMs would be allocated CPU and Memory resources that are carved from “Node 01’s” CPU and Memory capability. Now, let’s say the 25 VMs are allocated 50 GB of memory and 30 CPU cores in total. Then, for high availability to work, the rest of the servers in the cluster should have a SURPLUS capability of 50 GB RAM and CPU cycles equaling 30 CPU cores.

For example, in the vistualization system we implemented, we started off with 3 servers which had 32 core CPUs and 64 GB RAM memory. The maximum resource that we could allocate on one server was 45 GB memory and 25 CPUs (with a bit of overselling). This allocation policy left ample space for VMs from one failed server to be evenly distributed over the other two.

Enabling high availability

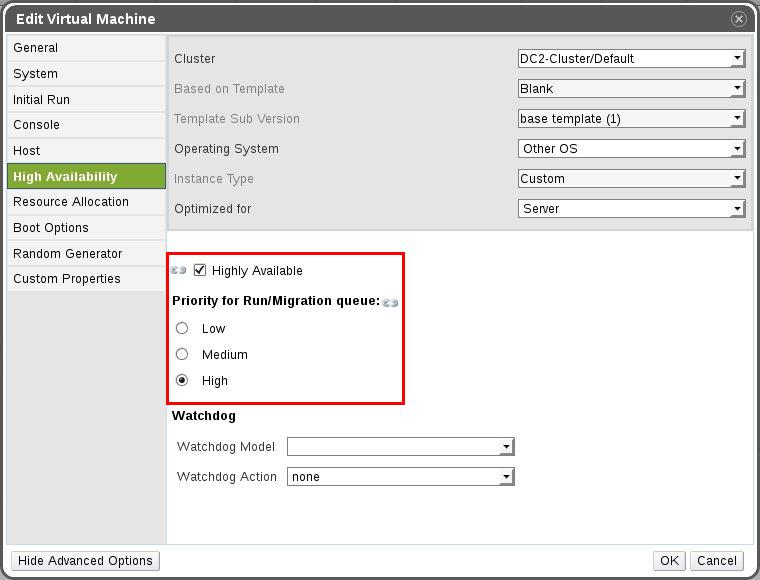

Once the shared storage, power management and surplus resource planning was completed in the oVirt system, the VMs were then ready to be enabled with “High Availability” fail-overs.

To do this, we enabled the “Highly Available” option under “System” –> “Data Centers” –> “Clusters” –> “VMs” –> “Edit” –> “High Availability”. Based on the hosting plan, the priority of fail-overs were selected in that interface. In case of a server failure, a VM marked as “High” priority would be started first, thereby minimizing downtime.

oVirt virtualization VM configuration

High availability is now an essential feature of any hosting solution. Here we’ve covered how high availability was implemented for an oVirt server virtualization system. Bobcares helps cloud providers, data centers and web hosts deliver industry standard IaaS services through custom configuration and preventive maintenance of virtualization systems.

Bobcares helps web hosts, cloud providers and data centers deliver reliable, responsive hosting services through 24/7 technical support and infrastructure management.